How to Download a Kaggle Dataset Directly to a Google Colab Notebook

Kaggle is a popular data science competition platform with a large online community of data scientists and machine learning engineers.

The platform contains a ton of datasets and notebooks that you can use to learn and practice your data science and machine learning skills. They even have competitions you can participate in.

Kaggle offers a 100% free platform for all users – but there are some restrictions depending on the resources you’re using.

For example, you can use their CPU system for an unlimited amount of time. But there are strict limitations on GPU and TPU usage. You can use their GPU for 30 hours and their TPU for 20 hours per week. The quota resets each week, so you start every new week with a fresh 30 hours of GPU usage and 20 hours of TPU usage.

Alongside Kaggle, there are other popular platforms for machine learning engineers and data scientists, like Google Colaboratory, or Google Colab for short.

In Google Colab, you can also use their CPU and GPU, but the free version has more limitations than a free Kaggle account. In Google Colab, you cannot get any GPU computational power until they allocate it from their free units. You don’t know how many hours you can use, and you don’t even know whether you will get any units over the next few days.

In order to get all the features, you need to subscribe to their pro plans which are quite expensive.

But sometimes you still may want to use Colab, in most cases for short tasks. In Colab, you can directly connect your Google Drive and use your datasets from there. You can also store your output from the notebook to Google Drive if you want.

When you’re working on a project, though, sometimes you’ll want to use datasets from Kaggle in Google Colab. So you’ll need to download the dataset from Kaggle and upload that to Colab’s temporary storage or your Google Drive.

You can probably guess that this is a very time-consuming process.

But there is a way to download a Kaggle dataset directly into a Google Colab notebook using an API call! In this article, I am going to show you how you can do that.

Video

If you would rather follow all of the steps in a video, you’re in luck. I also recorded a video walkthrough of this tutorial.

Types of Kaggle Datasets

Kaggle normally provides two types of datasets: typical datasets that anyone can upload, and competition datasets, which are typically uploaded by the competition organizers.

Even though you can download a Kaggle dataset easily, you can’t download a competition dataset if you don’t participate in that competition. But some competitions remain open, and you can access their datasets via “Late Submission”. So just make sure to check.

Prerequisites

To go through this tutorial and get the most out of it, you’ll need a Kaggle account, which is completely free. Simply head over to the official Kaggle website and create an account if you don’t have one already.

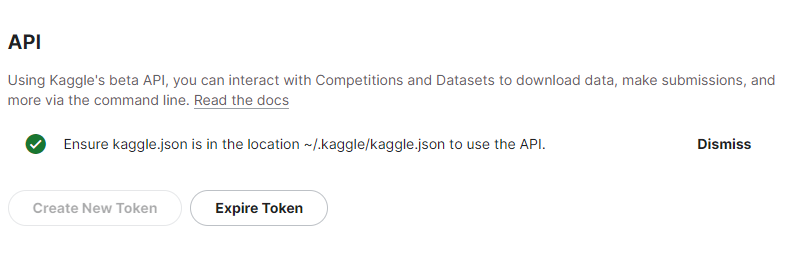

You’ll also need Kaggle’s API. Head over to the settings of your Kaggle account. Go to the API section, and click “Create New Token”. Keep in mind that Kaggle does not allow you to keep multiple tokens. You can use only one active token for your Kaggle account.

This will give you a kaggle.json file. Keep it safe, as you’ll need to use it later.

You also need a Google account if you want to use Google Colab. You may already have one, but if you don’t, go ahead and create a new account in Google.

Now, you can store your kaggle.json file in your Google Drive. I prefer to create a new folder and keep my JSON file there so that I can access it from Colab whenever I want.

How to Set Up Google Colab to Use the Kaggle API

You can simply open any Colab notebook where you want to use the Kaggle API to download the dataset.

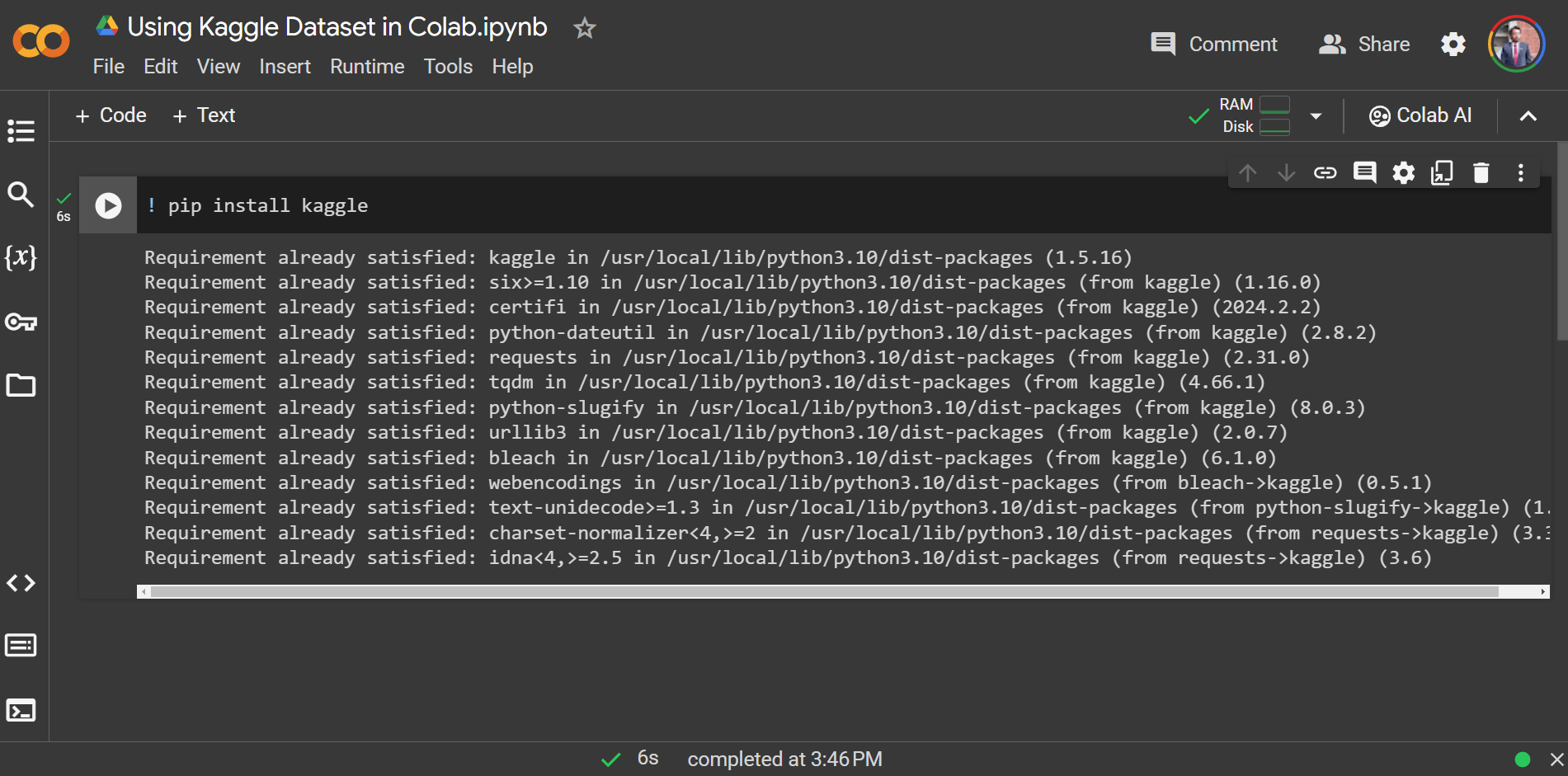

Install the Kaggle library

You need to install the Kaggle Python library before you start working with Kaggle. You can simply install it in the Colab notebook using the command ! pip install kaggle.

Mount Google Drive to Colab

Now you need to mount your Google Drive to the Colab notebook, since you’ve uploaded your kaggle.json file inside your Google drive.

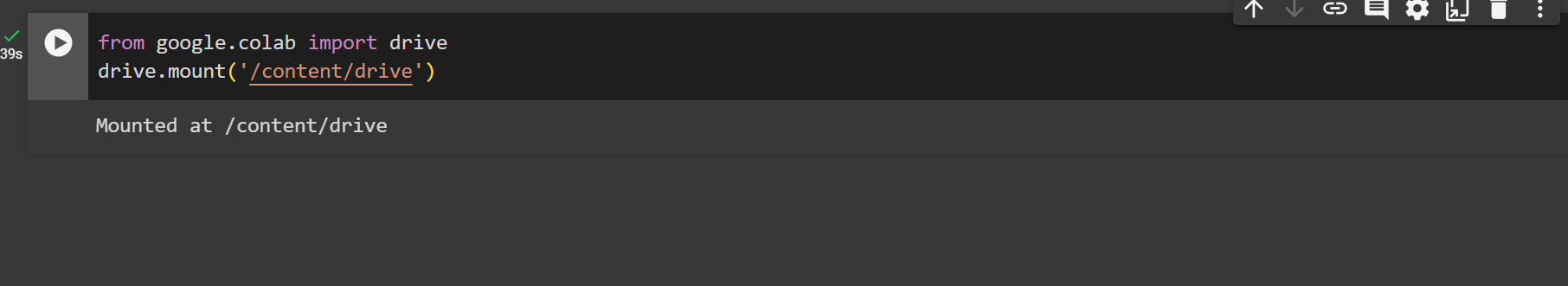

You can simply do that by using the two lines of code given below:

from google.colab import drive

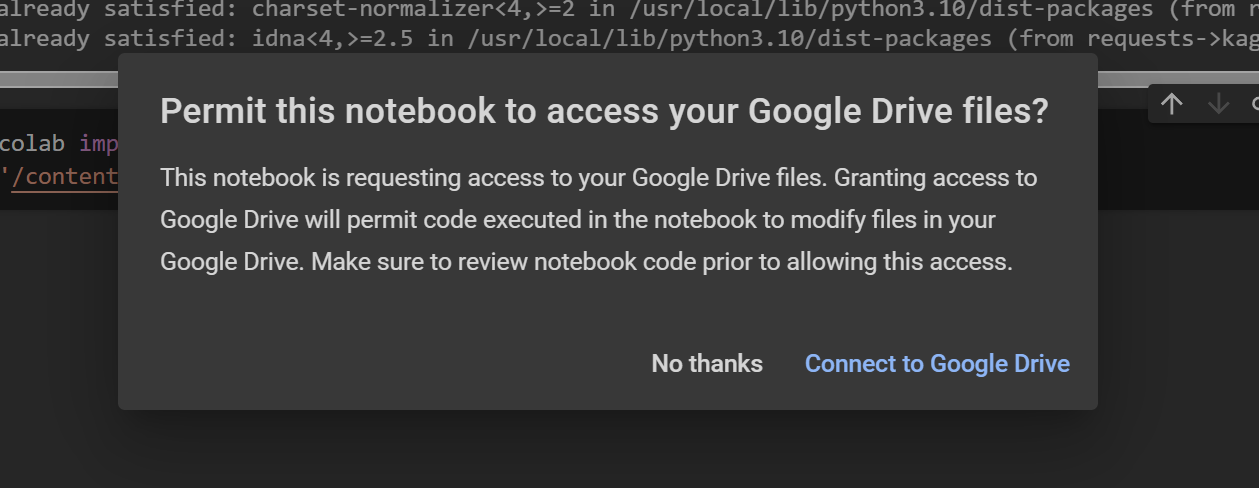

drive.mount('/content/drive')Make sure to give it permission to access your Google Drive:

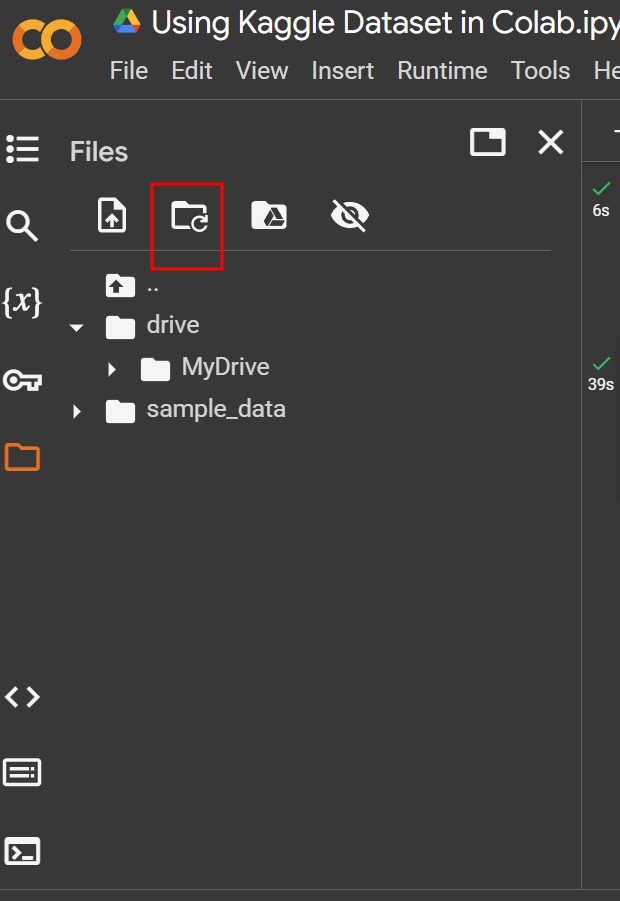

If you refresh the mounted folder icon, you will see your Google Drive and all of the content in the notebook.

Add the Kaggle API Token to the Colab Notebook

Now you need to add the Kaggle API token to the notebook. But before that, you can simply create a temporary directory for Kaggle at the temporary instance location on the Colab drive by using the command ! mkdir ~/.kaggle.

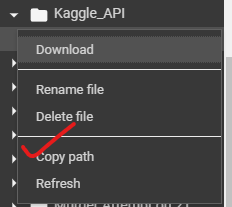

Now you need to copy your uploaded JSON file to that temporary Kaggle directory. For that, you need the path of the JSON file you uploaded earlier (a file path inside the mounted drive, not a web URL). You can copy that path directly from the Drive folder in the notebook's file browser.

You can get the path directly like this.

Then you can use the copy command like below:

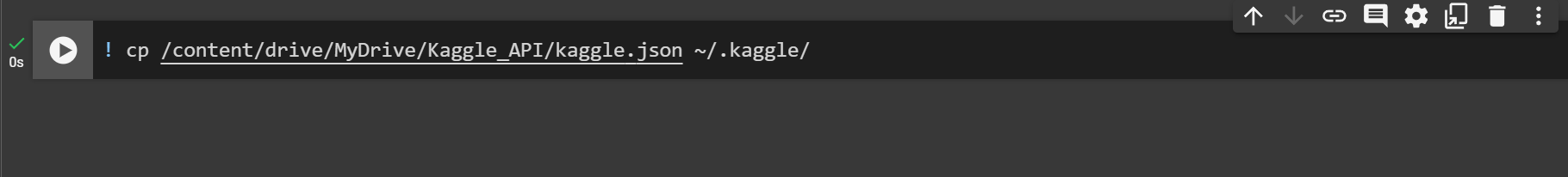

! cp kaggle_json_path ~/.kaggle/

For example, my JSON file is located at “/content/drive/MyDrive/Kaggle_API/kaggle.json”, so my command would be:

! cp /content/drive/MyDrive/Kaggle_API/kaggle.json ~/.kaggle/

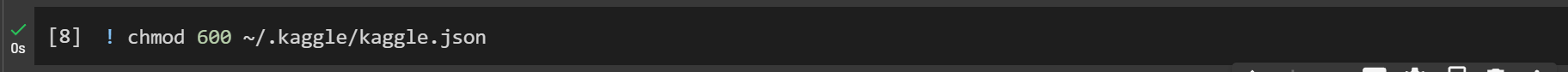

Now, for safety, you need to change the file permissions so that only the owner can read and write the token file.

You can use the command below to achieve that:

! chmod 600 ~/.kaggle/kaggle.json
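If you prefer to stay in a Python cell, the mkdir, cp, and chmod steps above can be sketched as a single helper. This is only a minimal sketch under a few assumptions: the function name install_kaggle_token and its dest_dir parameter are my own illustrative choices (they are not part of the Kaggle library), and the Drive path in the usage note is the example path from this article.

```python
import os
import shutil
import stat
from pathlib import Path


def install_kaggle_token(src, dest_dir=None):
    """Copy kaggle.json into dest_dir and restrict it to owner read/write.

    install_kaggle_token and dest_dir are illustrative names (assumptions),
    not part of the Kaggle library.
    """
    src = Path(src)
    # Default to ~/.kaggle, the directory used by the shell commands above.
    dest_dir = Path(dest_dir) if dest_dir is not None else Path.home() / ".kaggle"
    if not src.is_file():
        raise FileNotFoundError(f"No token file found at {src}")
    # Same effect as `mkdir ~/.kaggle`, but safe to run more than once.
    dest_dir.mkdir(parents=True, exist_ok=True)
    dest = dest_dir / "kaggle.json"
    # Same effect as `cp <src> ~/.kaggle/`.
    shutil.copyfile(src, dest)
    # Same effect as `chmod 600`: only the owner may read and write.
    os.chmod(dest, stat.S_IRUSR | stat.S_IWUSR)
    return dest
```

With the example path from above, the call would be install_kaggle_token("/content/drive/MyDrive/Kaggle_API/kaggle.json"). Because the directory is created with exist_ok=True, re-running the cell does not fail the way a second ! mkdir ~/.kaggle would.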

How to Download the Kaggle Dataset

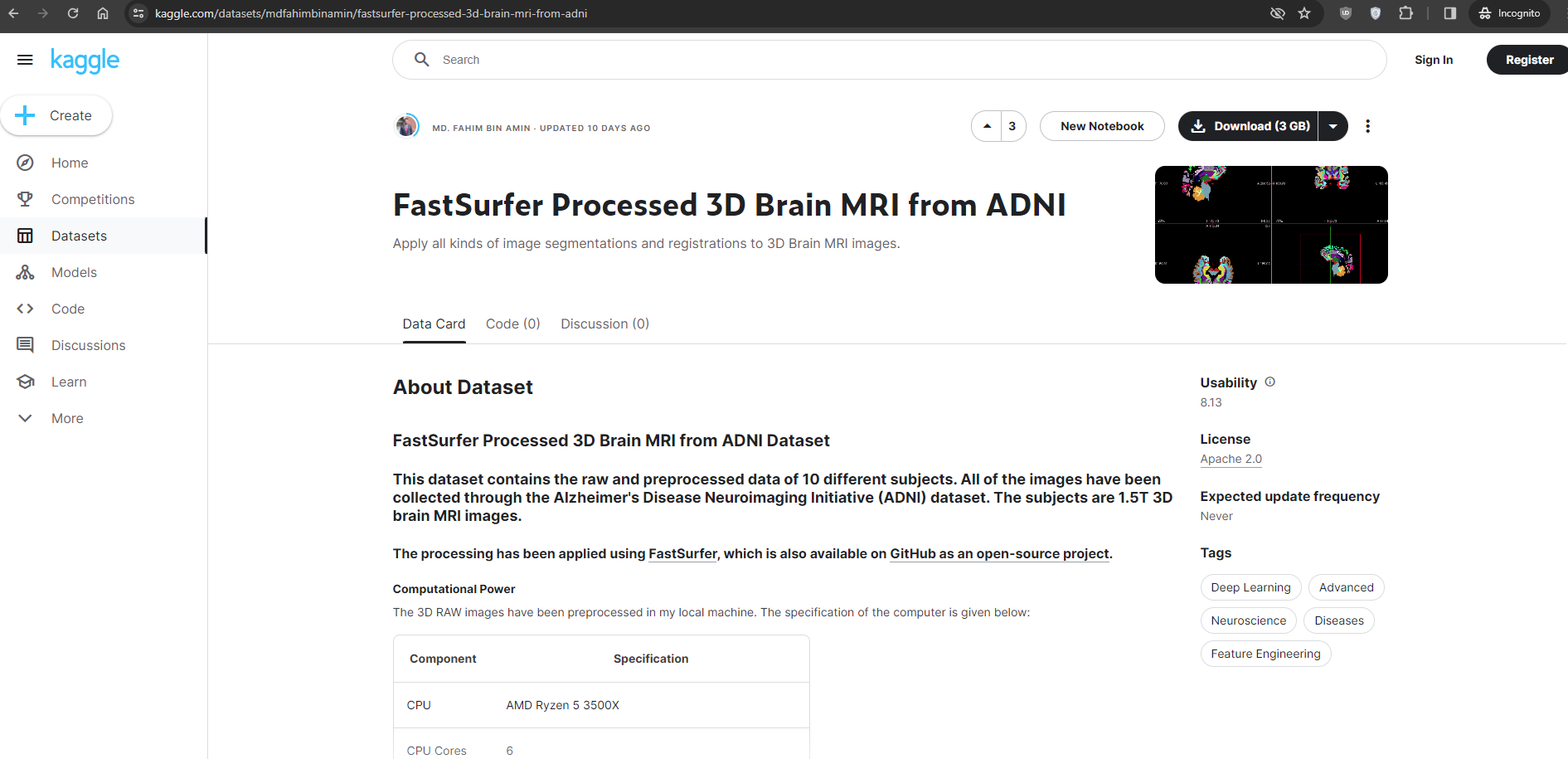

To download a typical Kaggle dataset, you have to find the dataset on Kaggle first.

Let’s say I want to download the following dataset from Kaggle:

Check the complete URL of the dataset, which in this case is:

https://www.kaggle.com/datasets/mdfahimbinamin/fastsurfer-processed-3d-brain-mri-from-adni

We need the “account_name_of_the_dataset_owner/dataset_path” string. From the URL, the account name of the dataset owner is mdfahimbinamin. The dataset path is fastsurfer-processed-3d-brain-mri-from-adni.
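To make the string extraction concrete, here is a small sketch that pulls the owner and dataset path out of a dataset URL. The helper name dataset_slug_from_url is hypothetical, and the sketch assumes the URL has the same layout as the one shown above (https://www.kaggle.com/datasets/owner/dataset-path).

```python
from urllib.parse import urlparse


def dataset_slug_from_url(url):
    """Return 'owner/dataset-path' from a Kaggle dataset URL.

    Hypothetical helper for illustration. It assumes the URL layout
    https://www.kaggle.com/datasets/<owner>/<dataset-path>.
    """
    # Split the URL path into its non-empty segments.
    parts = [p for p in urlparse(url).path.split("/") if p]
    if len(parts) < 3 or parts[0] != "datasets":
        raise ValueError(f"Not a Kaggle dataset URL: {url}")
    owner, name = parts[1], parts[2]
    return f"{owner}/{name}"


url = "https://www.kaggle.com/datasets/mdfahimbinamin/fastsurfer-processed-3d-brain-mri-from-adni"
slug = dataset_slug_from_url(url)
print(slug)  # mdfahimbinamin/fastsurfer-processed-3d-brain-mri-from-adni
```

The resulting string is exactly what the kaggle datasets download command expects as its argument.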

So to download this exact dataset from Kaggle to your Google Colab notebook, your command would be:

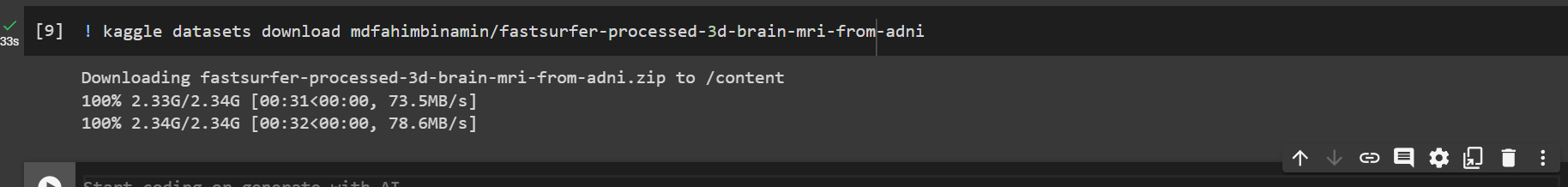

! kaggle datasets download mdfahimbinamin/fastsurfer-processed-3d-brain-mri-from-adni

The entire process happens on Google’s cloud machines rather than on your own computer, so the download should be quite fast.

By default, datasets are downloaded as a .zip file. If you need to unzip it, you can simply use the command below:

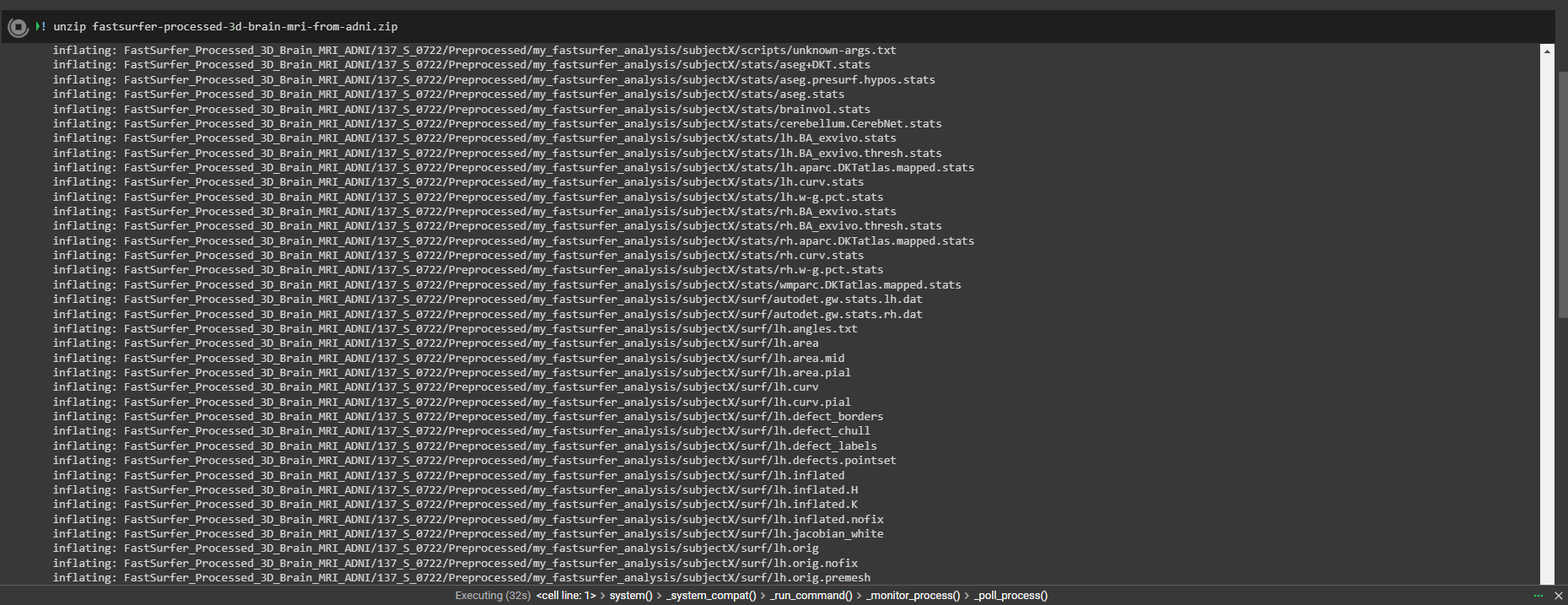

! unzip dataset-path.zip

For example, my dataset name/path was “fastsurfer-processed-3d-brain-mri-from-adni”. So I will use the following command:

! unzip fastsurfer-processed-3d-brain-mri-from-adni.zip
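If you would rather unzip from Python, the standard library's zipfile module can do the same job. The sketch below is one possible approach, not the only one: extract_dataset and target_dir are names I made up for illustration, and unlike the plain unzip command (which extracts into the current directory), it extracts into a folder named after the archive by default.

```python
import zipfile
from pathlib import Path


def extract_dataset(zip_path, target_dir=None):
    """Extract a downloaded dataset archive and return the names it contained.

    extract_dataset and target_dir are illustrative names (assumptions).
    By default, files go into a folder named after the archive.
    """
    zip_path = Path(zip_path)
    # "my-dataset.zip" -> "my-dataset" unless a target folder is given.
    target_dir = Path(target_dir) if target_dir is not None else zip_path.with_suffix("")
    target_dir.mkdir(parents=True, exist_ok=True)
    with zipfile.ZipFile(zip_path) as archive:
        archive.extractall(target_dir)
        return archive.namelist()
```

For the dataset above, extract_dataset("fastsurfer-processed-3d-brain-mri-from-adni.zip") would place the files in a folder called fastsurfer-processed-3d-brain-mri-from-adni next to the archive.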

That’s it! 😊

How to Download a Kaggle Competition Dataset

Before downloading a competition dataset, you need to make sure that you have either joined that competition or selected “Late Submission” using the same Kaggle account that you’re using for the Kaggle API token.

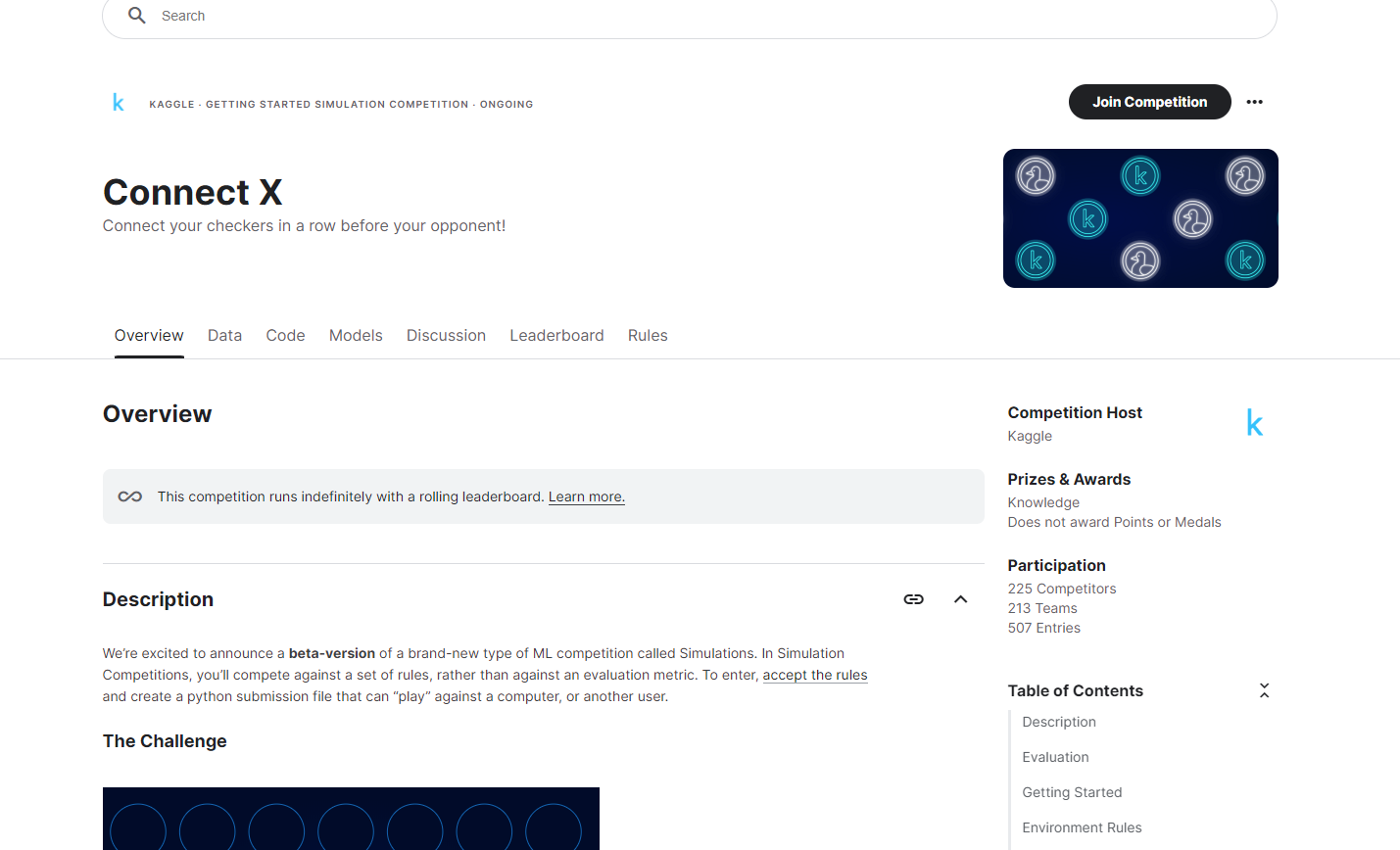

Suppose I’m joining the ConnectX competition on Kaggle.

I need to click “Join Competition” to get access to their dataset.

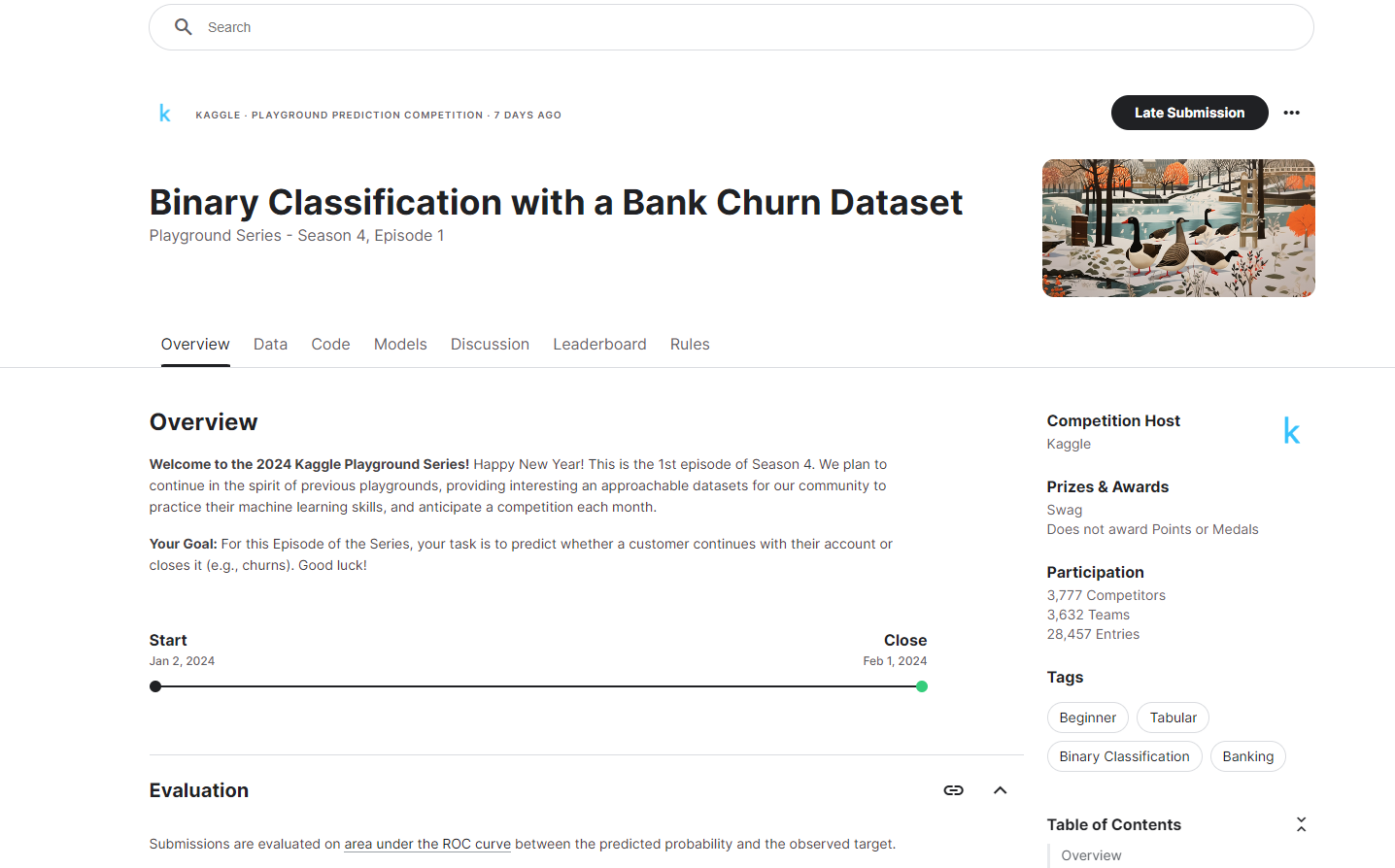

But if I want to download a dataset from a past competition, I need to click “Late Submission” to gain access to its dataset.

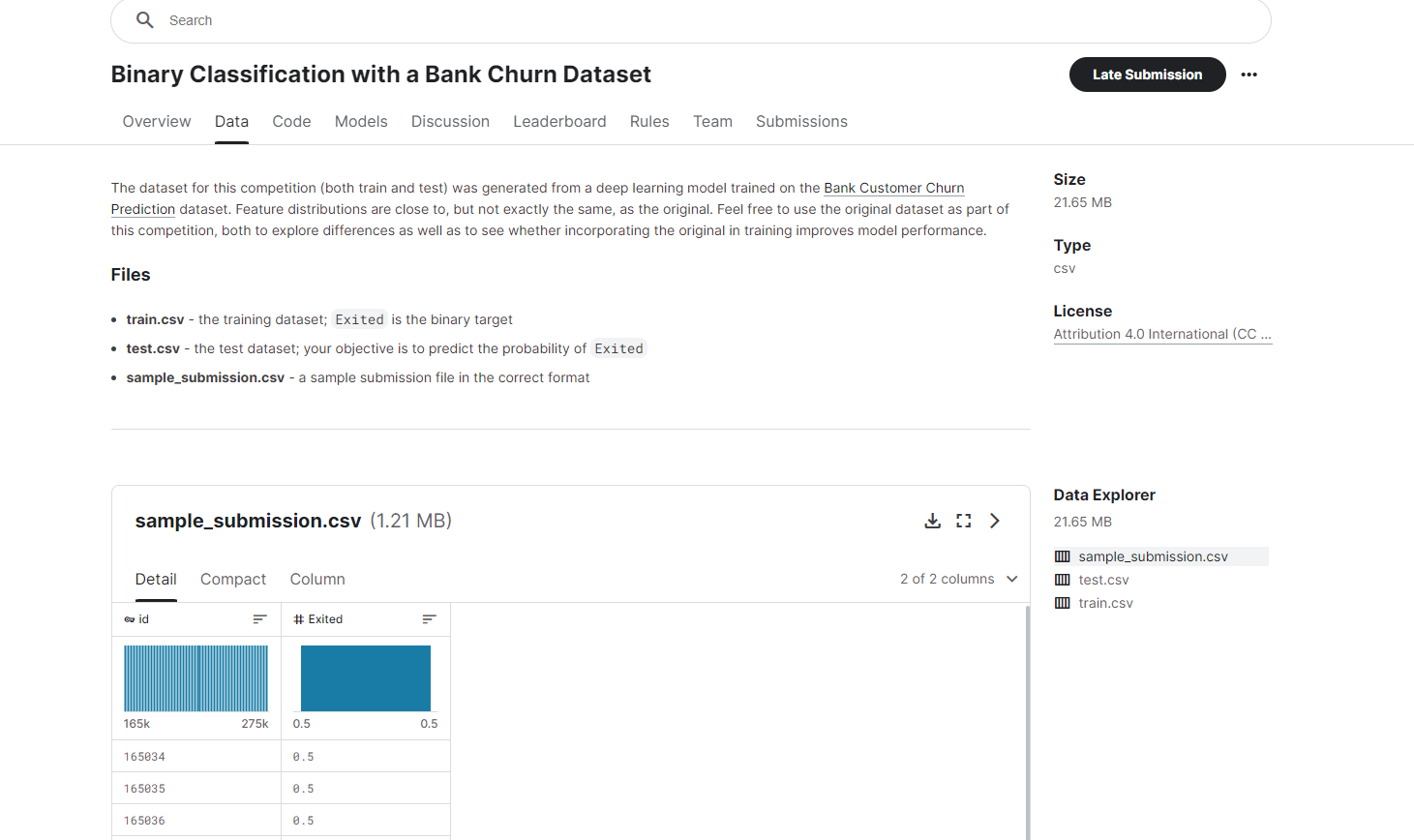

After clicking on “Late Submission”, I need to grab the URL. This time, I’m using the Binary Classification with a Bank Churn Dataset. The complete URL is: https://www.kaggle.com/competitions/playground-series-s4e1/overview

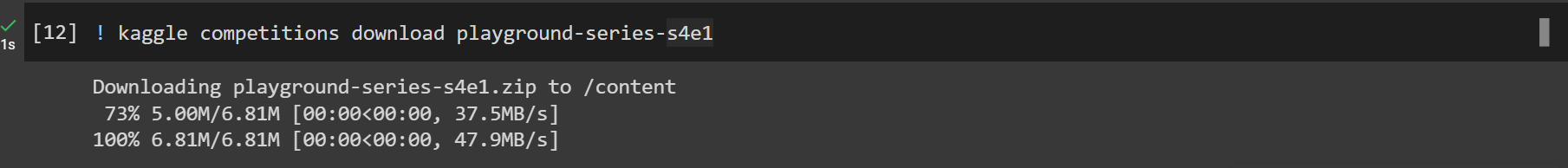

From the URL, I can see that the dataset is located at “playground-series-s4e1”. So I will use the following command to download the dataset to my Google Colab notebook:

! kaggle competitions download playground-series-s4e1

That’s it! 😊

How to Download a Specific File from a Kaggle Competition Dataset

Let’s say, I want to download a specific file from a Kaggle competition dataset. I can also do that.

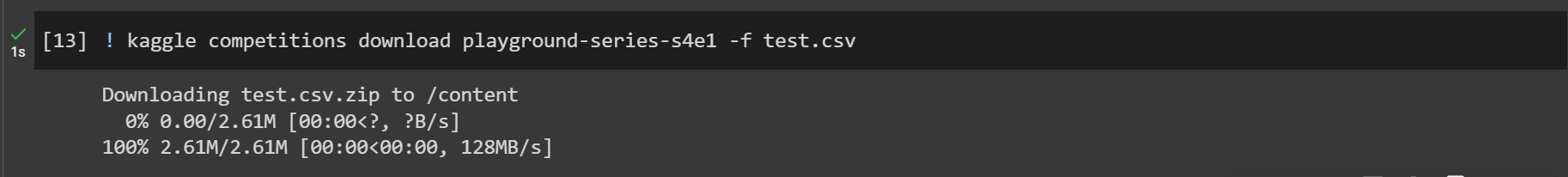

In the dataset used above, you can see that there are 3 files. Let’s say I want to download the test.csv file only.

To do this, the command would be structured like this: ! kaggle competitions download dataset-path -f file_name_with_extension.

So my command would be:

! kaggle competitions download playground-series-s4e1 -f test.csv
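The two competition commands differ only in the optional -f part, so they can be generated from the competition URL. The sketch below only builds the command string and does not call Kaggle itself. Both helper names are hypothetical, and it assumes the competition URL layout shown earlier (https://www.kaggle.com/competitions/competition-name/...).

```python
import shlex
from urllib.parse import urlparse


def competition_slug_from_url(url):
    """Return the competition name from a Kaggle competition URL.

    Hypothetical helper; assumes the layout
    https://www.kaggle.com/competitions/<competition-name>/...
    """
    parts = [p for p in urlparse(url).path.split("/") if p]
    if len(parts) < 2 or parts[0] != "competitions":
        raise ValueError(f"Not a Kaggle competition URL: {url}")
    return parts[1]


def competition_download_command(slug, file_name=None):
    """Build the `kaggle competitions download` command as a string.

    Hypothetical helper; `-f` is added only when a single file is wanted.
    """
    args = ["kaggle", "competitions", "download", slug]
    if file_name is not None:
        args += ["-f", file_name]
    # shlex.join quotes any argument that needs it (for example, spaces).
    return shlex.join(args)


url = "https://www.kaggle.com/competitions/playground-series-s4e1/overview"
command = competition_download_command(competition_slug_from_url(url), "test.csv")
print(command)  # kaggle competitions download playground-series-s4e1 -f test.csv
```

In a notebook cell, one way to run the generated string is !{command}, which relies on the notebook's variable expansion in shell lines.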

That’s it! 😊

Conclusion

I hope you have gained some valuable insights from the article.

If you enjoyed following this step-by-step procedure, then don’t forget to let me know on Twitter/X or LinkedIn.

You can follow me on GitHub as well if you are interested in open source. Make sure to check my website (https://fahimbinamin.com/) as well!

If you like to watch programming and technology-related videos, then you can check my YouTube channel, too. You can also check my other writings on Dev.to.

All the best for your programming and development journey. 😊

You can do it! Never give up! ❤️
